WHO WAS LIABLE FOR THE TESLA CRASH?


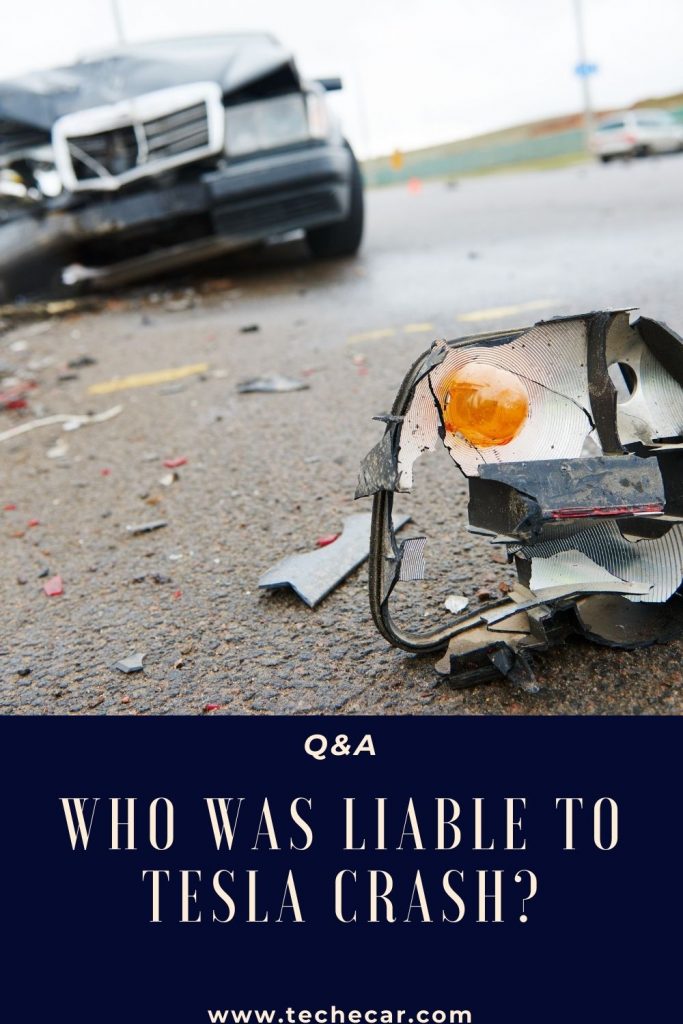

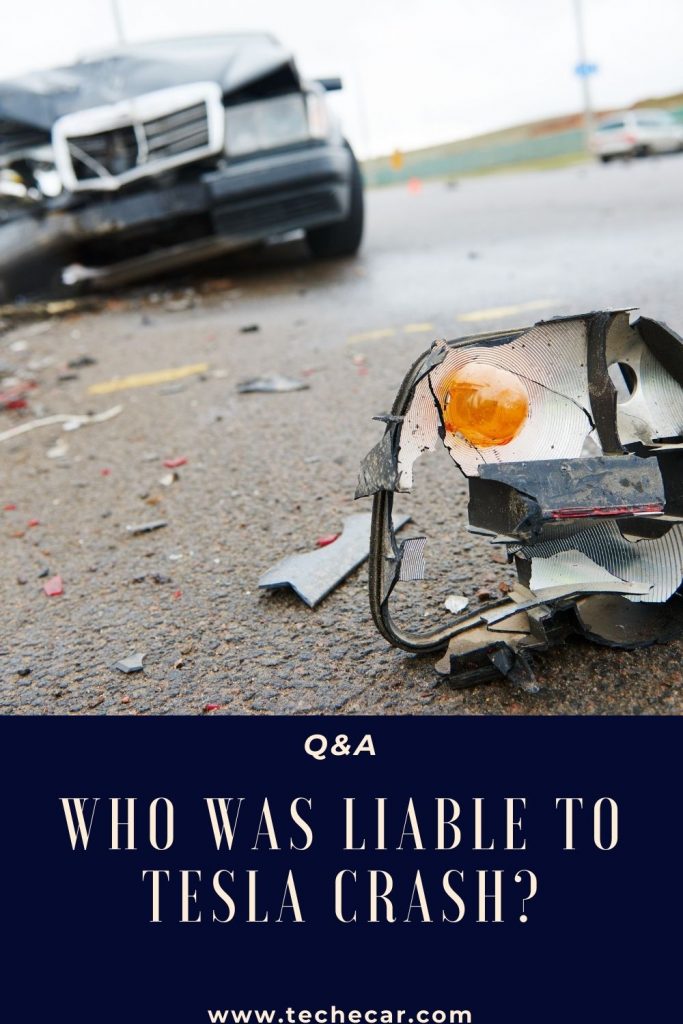

On Saturday, two men, aged 59 and 69, were killed in a Tesla Model S under unusual circumstances. Was Autopilot, a technology that some equate with autonomous driving, active at the time of the collision? The question arises because the driver’s seat appears to have been unoccupied when the car violently struck a tree. Elon Musk has already disputed that hypothesis: as usual, he took to Twitter to defend his company’s interests.

AN ACCIDENT THAT DESERVES AN INVESTIGATION

In response to an Internet user who argued that Autopilot could not have been active (because of the safety measures it includes), Elon Musk replied: “Your research, as an individual, is better than that of the professionals at the Wall Street Journal. The data collected so far show that Autopilot was not active and that this car did not have the ‘Full Self-Driving Capability’ option. Also, standard Autopilot needs lane markings to be activated, which this street did not have.”

It is hard to take Elon Musk entirely at his word, since he naturally wants to defend Autopilot against criticism, as he already did in 2018 following a previous fatal crash. Only the investigation conducted by the NTSB, the National Transportation Safety Board in the United States, will shed light on this accident.

For the time being, it is difficult to determine the causes of this accident, whatever the degree of Autopilot involvement. Normally, the technology is supposed to disengage if no one is seated in the driver’s position (a weight sensor signals this), if it no longer detects hands on the steering wheel, or if the lane is no longer identifiable. It seems that none of these three criteria was met when the Model S left the road. For the moment, therefore, the preliminary evidence tends to support Elon Musk’s statements, but the investigation is only beginning.

Note that there are ways to trick the system. It is possible, for example, to put a heavy object on the seat to make it believe that someone is sitting there, and some devices can simulate hands on the steering wheel. One of the victims may have used these techniques to push the technology to its limits. Autopilot, which remains a work in progress, can also have flaws and go beyond some of its own limits, hence the need to stay constantly vigilant. The NTSB investigation will therefore be decisive in determining the exact circumstances of the accident.

“Nobody was driving”: a fatal Tesla crash reminds us that autonomous driving does not exist.

Two men were killed in a Tesla Model S. There was no one behind the wheel at the time of the crash.

This is yet another Tesla-related news item that sends shivers down your spine: as the New York Times reported on April 18, two men lost their lives aboard a Model S on Saturday night in Texas. According to the initial findings of the investigation, no one was behind the wheel at the time of the crash. Everything suggests that the two victims wanted to push Autopilot, a technology that allows the car to drive itself in very favorable conditions, a little too far.

Constable Mark Herman said one of the men was seated in the front, on the passenger side, while the other was seated in the rear. Although nothing confirms that Autopilot was activated at the time, the two men reportedly discussed the technology before setting off on their tragic trip. By coincidence, in a tweet published the same day, Elon Musk praised the effectiveness of Autopilot, citing a new study published by Tesla: when it is activated, the driver-assistance system is said to reduce the risk of accidents by a factor of nearly 10.

FURTHER PROOF THAT TESLAS ARE NOT SELF-DRIVING CARS

Teslas are not yet 100% autonomous cars, and this accident, which unfortunately cost the lives of two men, is further proof. The 2019 Tesla Model S crashed into a tree at very high speed after missing a turn in a residential area in The Woodlands. The car immediately caught fire, and the fire department took a long time to put it out. “It took four hours to put out a fire that normally can be put out in a matter of minutes,” Herman said.

Several elements suggest that the two men took reckless risks. First, there is the location: a residential area, apparently close to a forest, does not seem the ideal place to test Autopilot, which is currently designed to offer fairly safe semi-autonomous driving on highways (which are easier for artificial intelligence to handle). Numerama was able to verify this during an extended test drive of the Model 3.

There is also the indication that no one was behind the wheel at the time of the accident. Tesla never ceases to remind people that Autopilot, while it makes driving much easier, requires constant vigilance, just as if you were driving normally. The online configurator reads: “The current functions require active monitoring by the driver and do not make the vehicle autonomous.”

While Tesla can be accused of going a little too far in developing technologies that could endanger users’ lives (and cause confusion in people’s minds), owners remain responsible for using them only in conditions that lend themselves to it. In the past, we have seen examples showing that people will do all sorts of things with Autopilot, fortunately with less tragic consequences.
